Poll: How Much Do You Earn From Deepseek?

Author: Oma · Posted: 25-02-01 12:28

DeepSeek offers a range of options tailored to our clients’ specific objectives. Available now on Hugging Face, the model gives users seamless access through the web and an API, and, based on observations and tests from third-party researchers, it appears to be the most advanced large language model (LLM) currently available in the open-source landscape.

Applications: Stable Diffusion XL Base 1.0 (SDXL) serves various purposes, including concept art for media, graphic design for advertising, educational and research visuals, and personal creative exploration. Applications: AI writing assistance, story generation, code completion, concept art creation, and more. Applications: a broad range, from advanced natural language processing and personalized content recommendations to complex problem-solving in domains such as finance, healthcare, and technology.

"Our work demonstrates that, with rigorous evaluation mechanisms like Lean, it is feasible to synthesize large-scale, high-quality data." The high-quality examples were then passed to the DeepSeek-Prover model, which attempted to generate proofs for them.

So if you think about mixture of experts, if you look at the Mistral MoE model, which is 8x7 billion parameters, you need about 80 gigabytes of VRAM to run it, which is the largest H100 out there. The other example you can think of is Anthropic.
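The "about 80 gigabytes" figure above is a spoken rule of thumb. A quick way to sanity-check such numbers is to multiply the parameter count by the bytes stored per parameter. The sketch below counts only the weights (no KV cache, activations, or framework overhead). Its 46.7B total-parameter figure for Mixtral 8x7B is an assumption taken from Mistral's own release, not from this post: because the eight experts share the attention layers, the total is smaller than a naive 8 × 7B = 56B. `weight_memory_gb` is a hypothetical helper name.

```python
def weight_memory_gb(num_params: float, bytes_per_param: float) -> float:
    """Memory needed just to hold the weights, in decimal GB (1 GB = 1e9 bytes)."""
    return num_params * bytes_per_param / 1e9


# Assumption: ~46.7B total parameters for Mixtral 8x7B. The experts share the
# attention layers, so this is below the naive 8 * 7B = 56B.
MIXTRAL_TOTAL_PARAMS = 46.7e9

# Compare common storage precisions (bytes per parameter).
for label, nbytes in [("fp16/bf16", 2), ("int8", 1), ("4-bit", 0.5)]:
    gb = weight_memory_gb(MIXTRAL_TOTAL_PARAMS, nbytes)
    print(f"{label:>9}: ~{gb:.1f} GB of weights")
```

By this estimate, 16-bit weights alone come to roughly 93 GB, more than a single 80 GB H100 holds, while 8-bit or 4-bit quantization brings them well under that limit. A single headline figure is therefore best read as an order-of-magnitude guide that depends on precision.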


"It’s plausible to me that they can train a model with $6m," Domingos added. Having covered AI breakthroughs, new LLM launches, and expert opinions, we deliver insightful and engaging content that keeps readers informed and intrigued. To ensure a fair assessment of DeepSeek LLM 67B Chat, the developers introduced fresh problem sets. AIMO has announced a series of progress prizes. This approach allows for more specialized, accurate, and context-aware responses, and sets a new standard in handling multi-faceted AI challenges. As we embrace these advancements, it’s vital to approach them with an eye toward ethical considerations and inclusivity, ensuring a future where AI technology augments human potential and aligns with our collective values.

Jordan Schneider: Yeah, it’s been an interesting experience for them, betting the house on this, only to be upstaged by a handful of startups that have raised like a hundred million dollars.

Jordan Schneider: What’s interesting is you’ve seen a similar dynamic where the established companies have struggled relative to the startups: Google was sitting on its hands for a while, and the same thing with Baidu, just not quite getting to where the independent labs were.

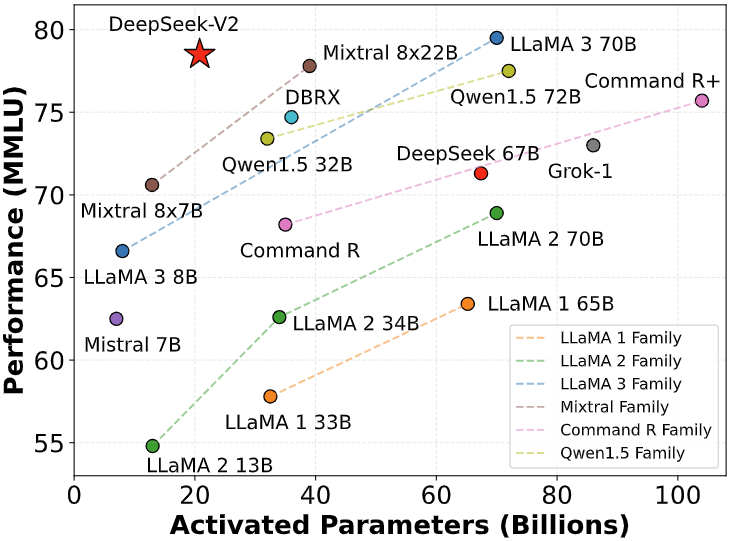

The success of INTELLECT-1 tells us that some people in the world really want a counterbalance to today’s centralized industry, and now they have the technology to make this vision a reality. Recently announced for our Free and Pro users, DeepSeek-V2 is now the recommended default model for Enterprise customers too. We recommend that self-hosted customers make this change when they update. Cloud customers will see these default models appear when their instance is updated.

For Feed-Forward Networks (FFNs), we adopt the DeepSeekMoE architecture, a high-performance MoE architecture that enables training stronger models at lower costs. The conventional MoE architecture divides work among multiple expert models by using a gating mechanism (sparse gating) to select, for each input, the experts most relevant to it. A ‘shared expert’ is a specific expert that is ‘always activated’ regardless of the router’s decision described above; it handles the ‘common knowledge’ that many different tasks may need. However, DeepSeek soon shifted its focus away from ‘benchmarks’ and toward solving ‘fundamental challenges’, and that decision paid off: the company has since released, in rapid succession, top-tier models for a wide range of uses, including DeepSeek LLM, DeepSeekMoE, DeepSeekMath, DeepSeek-VL, DeepSeek-V2, DeepSeek-Coder-V2, and DeepSeek-Prover-V1.5. DeepSeek-Coder-V2, arguably the most popular of the models released so far, shows top-tier performance and cost competitiveness on coding tasks, and because it can be run with Ollama, it is a very attractive option for indie developers and engineers.
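The two mechanisms described above, sparse gating that sends each input to only a few experts and a ‘shared expert’ that is always active, can be illustrated with a toy MoE layer. This is a minimal pure-Python sketch under stated assumptions, not DeepSeekMoE’s actual implementation: the experts are toy scaling functions, the router is a single hypothetical weight matrix, and `moe_forward`, `router_weights`, and `top_k` are illustrative names.

```python
import math
from typing import Callable, List, Sequence

Vector = List[float]
Expert = Callable[[Vector], Vector]


def softmax(logits: Sequence[float]) -> Vector:
    """Numerically stable softmax."""
    m = max(logits)
    exps = [math.exp(z - m) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]


def add_scaled(acc: Vector, y: Vector, scale: float) -> Vector:
    """Return acc + scale * y, element-wise."""
    return [a + scale * b for a, b in zip(acc, y)]


def moe_forward(x: Vector,
                router_weights: List[Vector],
                routed_experts: List[Expert],
                shared_experts: List[Expert],
                top_k: int = 2) -> Vector:
    """Toy MoE layer: top-k sparse gating over routed experts plus always-on shared experts."""
    # Router (hypothetical): one score per routed expert, a dot product with a weight row.
    logits = [sum(w * xi for w, xi in zip(row, x)) for row in router_weights]
    gates = softmax(logits)
    # Sparse gating: only the k highest-scoring experts are evaluated at all.
    chosen = sorted(range(len(gates)), key=lambda i: gates[i], reverse=True)[:top_k]
    out = [0.0] * len(x)
    for i in chosen:
        out = add_scaled(out, routed_experts[i](x), gates[i])
    # Shared experts bypass the router and process every input ("common knowledge").
    for expert in shared_experts:
        out = add_scaled(out, expert(x), 1.0)
    return out


if __name__ == "__main__":
    # Toy experts: each one just scales its input by a different factor.
    routed = [lambda v, s=s: [s * a for a in v] for s in (1.0, 2.0, 3.0, 4.0)]
    shared = [lambda v: list(v)]  # identity "shared expert"
    router = [[0.0, 0.0], [0.0, 0.0], [1.0, 1.0], [2.0, 2.0]]  # favours experts 2 and 3
    print(moe_forward([1.0, 2.0], router, routed, shared, top_k=2))
```

Only the `top_k` selected experts are ever called, which is where sparse gating saves compute. This sketch does not renormalize the selected gate values after dropping the rest; MoE variants differ on that detail.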

One special feature of the DeepSeek-Coder-V2 model is that it ‘fills in missing parts of code’. For example, if code is missing in the middle of a file, the model can predict what belongs in the gap based on the surrounding code. As I mentioned at the start of this post, I think DeepSeek the startup itself, its research direction, and the stream of models it releases are all worth continuing to watch. DeepSeekMoE can be seen as an advanced version of MoE, designed to address the problems described above so that LLMs can handle complex tasks better. DeepSeek-Coder-V2, a major upgrade over its predecessor DeepSeek-Coder, was trained on broader training data than the previous version and combines techniques such as ‘Fill-In-The-Middle’ and ‘reinforcement learning’, so that despite its large size it is highly efficient and handles context better. Its quality-to-cost ratio outclasses other open-source models, and it holds its own against Big Tech and large startups.

Above, I described DeepSeek-Coder-V2 as ‘the first open-source model to surpass GPT4-Turbo in coding and math’. DeepSeek-Prover-V1.5 is the latest open-source model that can be used to prove theorems in the Lean 4 environment. The researchers evaluated their model on the Lean 4 miniF2F and FIMO benchmarks, which contain hundreds of mathematical problems. Once they’ve done this, they do large-scale reinforcement learning training, which "focuses on enhancing the model’s reasoning capabilities, particularly in reasoning-intensive tasks such as coding, mathematics, science, and logic reasoning, which involve well-defined problems with clear solutions".
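Fill-In-The-Middle works by rearranging a file so that the missing middle comes last: the model sees the code before and after the gap, then generates the gap. The sketch below builds such a prompt in prefix-suffix-middle (PSM) order and splices a completion back in. The sentinel strings `<FIM_PREFIX>`, `<FIM_SUFFIX>`, `<FIM_MIDDLE>` and the `<HOLE>` marker are hypothetical placeholders; each model family, DeepSeek-Coder-V2 included, defines its own FIM special tokens, which should be taken from that model’s documentation.

```python
from typing import Tuple

# Hypothetical sentinel tokens; real models define their own FIM special tokens.
FIM_PREFIX = "<FIM_PREFIX>"
FIM_SUFFIX = "<FIM_SUFFIX>"
FIM_MIDDLE = "<FIM_MIDDLE>"


def split_at_hole(source: str, hole_marker: str = "<HOLE>") -> Tuple[str, str]:
    """Split source code into the text before and after a single hole marker."""
    if source.count(hole_marker) != 1:
        raise ValueError("expected exactly one hole marker")
    prefix, suffix = source.split(hole_marker)
    return prefix, suffix


def build_fim_prompt(prefix: str, suffix: str) -> str:
    """PSM layout: the model is expected to continue right after FIM_MIDDLE."""
    return f"{FIM_PREFIX}{prefix}{FIM_SUFFIX}{suffix}{FIM_MIDDLE}"


def fill(source: str, middle: str, hole_marker: str = "<HOLE>") -> str:
    """Splice a generated middle back into the original file."""
    prefix, suffix = split_at_hole(source, hole_marker)
    return prefix + middle + suffix


example_src = "def area(r):\n    <HOLE>\n"
pre, suf = split_at_hole(example_src)
print(build_fim_prompt(pre, suf))
# A model would generate the middle; here it is supplied by hand.
print(fill(example_src, "return 3.14159 * r * r"))
```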
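For readers unfamiliar with Lean 4: a prover such as DeepSeek-Prover-V1.5 receives a formal theorem statement and must produce a proof that Lean accepts, and a proof that does not check is simply rejected, which is what makes the setting rigorously verifiable. The toy theorems below are illustrative only (they are not taken from miniF2F or FIMO), and the names `add_comm_example` and `lt_succ_example` are hypothetical. They use only Lean 4 core, without Mathlib.

```lean
-- Toy statements in the style of competition problems (not from miniF2F or FIMO).
-- All proofs rely only on Lean 4 core.

-- A closed arithmetic fact, checked by definitional unfolding.
example : 2 + 2 = 4 := rfl

-- A general statement about natural numbers, closed by an existing core lemma.
theorem add_comm_example (a b : Nat) : a + b = b + a := Nat.add_comm a b

-- A linear-arithmetic goal discharged by the `omega` decision procedure.
theorem lt_succ_example (n : Nat) (h : n < 5) : n + 1 ≤ 5 := by omega
```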

If you have any questions about where and how to use DeepSeek, you can contact us through the website.
